The era of software that merely stores and retrieves data is fading. Modern applications are expected to reason, synthesize, and interact. Building a SaaS product with large language models offers a path to creating software that solves problems previously requiring human intervention.

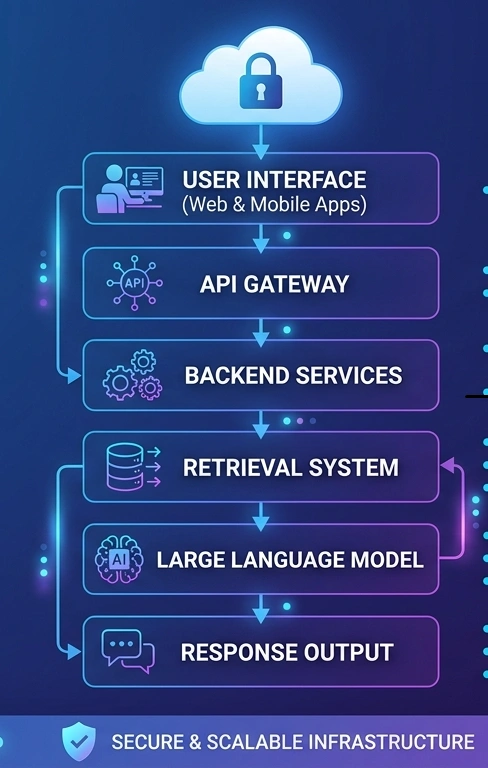

For developers, the shift from traditional logic to probabilistic AI outputs requires a new architectural mindset. Building an enterprise LLM solution is about creating a bridge between raw intelligence and secure, scalable business workflows.

The Shift to Generative SaaS

Software used to be a set of rigid rules: if a user clicked button A, the system performed action B. With Large Language Models (LLMs), we can now build systems that interpret intent. This makes it possible to build tools that draft reports, audit financial statements, or produce an architectural blueprint from a simple description.

Developers and founders are moving toward these models because they shorten the time to market for complex features. Tasks that previously required a dedicated machine learning team can now be handled through API calls and careful data management.

Why Enterprise LLM Solutions are the Future

Consumer AI technologies are popular, but the true value is in business-grade apps. There are three main ways that an Enterprise LLM solution is different from a regular chatbot:

- Data Sovereignty: Companies need assurance that their data is not being used to train public models.

- Predictable Costs: Models bill by the “token,” the unit of text they process. To protect profit margins as an LLM-based product scales, you need to track and control token usage.

- Contextual Accuracy: The AI has to understand the specific terminology, history, and goals of a particular business.
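
To make the cost point concrete, here is a minimal sketch of the arithmetic behind token-based pricing. The `ModelPricing` class, the function names, and every number are hypothetical placeholders, not any provider's real rates; substitute the figures from your own provider's price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPricing:
    """Hypothetical prices in USD per one million tokens."""
    input_per_million: float
    output_per_million: float


def request_cost(pricing: ModelPricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request: tokens in each direction times the matching rate."""
    total = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    )
    return total / 1_000_000


def monthly_cost_per_user(
    pricing: ModelPricing,
    requests_per_month: int,
    avg_input_tokens: int,
    avg_output_tokens: int,
) -> float:
    """Rough monthly model spend for one user, based on average request size."""
    return requests_per_month * request_cost(pricing, avg_input_tokens, avg_output_tokens)


# Placeholder numbers for illustration only.
pricing = ModelPricing(input_per_million=1.0, output_per_million=4.0)
cost = monthly_cost_per_user(
    pricing, requests_per_month=200, avg_input_tokens=3000, avg_output_tokens=500
)
print(f"Estimated model cost per user per month: ${cost:.2f}")
```

Comparing this figure with your subscription price shows how much margin remains once usage grows.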

If you address these three concerns, you can build a SaaS application with large language models that large businesses, not just individual consumers, will want to adopt.

The Technical Framework: From API to Architecture

Building a production-ready application requires more than a single prompt. You must manage the flow of information between your user, your database, and the AI model.

Selecting the Right Model

Not every task needs the most expensive model available. You might use a smaller, faster model for basic text categorization and save the high-reasoning models for complex data analysis.

- Proprietary Models: These typically offer strong performance with minimal setup, since the provider hosts the model behind an API.

- Open-Source Models: These allow you to host the model on your own servers, providing total control over data privacy.
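
One way to apply the "right model for the task" idea is a small routing function. The sketch below is only an illustration: the model identifiers and task names are hypothetical, and a real product would tune the routing rules against its own quality and cost measurements.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; replace with the models you actually use.
SMALL_MODEL = "small-fast-model"
LARGE_MODEL = "large-reasoning-model"

# Hypothetical task names that a cheaper model is assumed to handle well.
SIMPLE_TASKS = {"categorize", "extract_fields", "detect_language"}


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


def choose_model(task: str) -> Route:
    """Send known-simple tasks to the small model and everything else to the large one."""
    if task in SIMPLE_TASKS:
        return Route(SMALL_MODEL, "task is on the simple-task list")
    # Defaulting to the stronger model keeps unknown tasks safe at a higher cost.
    return Route(LARGE_MODEL, "task is not known to be simple")


for task in ("categorize", "analyze_financials"):
    route = choose_model(task)
    print(f"{task} -> {route.model} ({route.reason})")
```

Defaulting unknown tasks to the larger model trades some cost for safety; you could invert that default once you have evidence the smaller model is good enough.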

Retrieval-Augmented Generation (RAG)

An LLM’s knowledge stops at its training cutoff date, and it has never seen your users’ private data. To make your SaaS product with large language models useful for real work, it needs access to that data. This is where RAG comes in.

Instead of sending a user’s question directly to the AI, your system first searches a “vector database.” This database holds snippets of the user’s own documents, stored as embeddings so they can be matched by meaning. The system finds the most relevant snippets and sends them to the LLM alongside the question as a “cheat sheet.” This grounds the answer in the user’s documents rather than in the model’s guesses, although it does not eliminate errors entirely.
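
The retrieval step can be sketched in a few lines. This is a toy under stated assumptions: `embed` uses plain word counts as a stand-in for a real embedding model, a Python list stands in for the vector database, and all function names are hypothetical. The flow (embed, rank by similarity, build the prompt) is the part that carries over.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, snippets: list[str], k: int = 2) -> list[str]:
    """Return the k snippets most similar to the question."""
    q = embed(question)
    ranked = sorted(snippets, key=lambda s: cosine(q, embed(s)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Place the retrieved snippets ahead of the question as the 'cheat sheet'."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Context:\n{joined}\n\nQuestion: {question}"


snippets = [
    "Invoices are due within 30 days of the billing date.",
    "The office is closed on public holidays.",
    "Late invoices incur a 2 percent monthly fee.",
]
question = "When are invoices due?"
top = retrieve(question, snippets, k=2)
prompt = build_prompt(question, top)
print(prompt)
```

In production, `embed` would call an embedding model and `retrieve` would query the vector database, but the prompt sent to the LLM would be assembled in much the same way.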

Scalable Infrastructure

Your backend must handle the latency associated with AI. A standard API request might take milliseconds, while an LLM response might take seconds. Asynchronous processing and “streaming” responses, where text appears incrementally as the model generates it, are necessary for a good user experience.
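
A minimal sketch of the streaming pattern, using Python’s `asyncio`. The `fake_model_stream` generator is a hypothetical stand-in for a provider’s streaming API, and `send` represents whatever pushes data to the browser (for example a WebSocket or server-sent events); neither is a real library call.

```python
import asyncio
from typing import AsyncIterator, Awaitable, Callable


async def fake_model_stream(prompt: str) -> AsyncIterator[str]:
    """Hypothetical stand-in for a streaming model API: yields text chunks with a delay."""
    for word in f"Echoing your request: {prompt}".split():
        await asyncio.sleep(0.01)  # simulates per-chunk generation latency
        yield word + " "


async def stream_to_client(prompt: str, send: Callable[[str], Awaitable[None]]) -> str:
    """Forward each chunk as soon as it arrives, then return the full text for storage."""
    parts = []
    async for chunk in fake_model_stream(prompt):
        await send(chunk)  # the user sees this chunk immediately
        parts.append(chunk)
    return "".join(parts).strip()


async def main() -> str:
    async def send(chunk: str) -> None:
        print(chunk, end="", flush=True)

    text = await stream_to_client("summarize the Q3 report", send)
    print()
    return text


result = asyncio.run(main())
```

Because the handler awaits between chunks, the event loop stays free to serve other users while one response is still being generated.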

Real-World Case Study: Automated Compliance SaaS

Consider a startup building a tool for healthcare providers to check if their documents meet legal standards.

- The Problem

Healthcare regulations change frequently. Manual audits are slow and expensive.

- The Solution

A SaaS product with large language models that scans internal documents against a database of the latest regulations.

- The Architecture

The system uses RAG to pull the latest legal text. The LLM then compares the internal document to the legal requirement and highlights missing sections.

- The Result

Audits that took weeks now take minutes, and the software becomes an Enterprise LLM solution that hospitals are willing to pay for on a recurring basis.

Managing the Risks of LLM Integration

Integrating AI brings unique challenges that traditional software avoids.

Avoiding “Hallucinations”

LLMs are tuned to be helpful, which sometimes leads them to invent facts. To reduce this, developers should use strict “system prompts” that tell the AI: “Only answer based on the provided text. If you don’t know the answer, say so.” This lowers the risk of hallucination but does not remove it, so high-stakes outputs still need review.
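
As a rough sketch, the system prompt and the retrieved text can be packaged like this. The role/content message layout is an assumption modeled on the convention many chat-style APIs use; check your provider’s documentation for the exact request format. `build_messages` is a hypothetical helper name.

```python
# The instruction quoted above, kept in one place so every request uses the same wording.
SYSTEM_PROMPT = (
    "Only answer based on the provided text. "
    "If you don't know the answer, say so."
)


def build_messages(context: str, question: str) -> list[dict]:
    """Pair the strict system prompt with the retrieved text and the user's question."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {
            "role": "user",
            "content": f"Provided text:\n{context}\n\nQuestion: {question}",
        },
    ]


messages = build_messages(
    context="Invoices are due within 30 days of the billing date.",
    question="When are invoices due?",
)
for message in messages:
    print(f"[{message['role']}] {message['content']}")
```

Keeping the instruction in the system message rather than mixing it into user input makes it harder for a user’s question to override it by accident.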

Security and Privacy

When building a SaaS product with large language models, you must ensure that User A’s data never ends up in a prompt sent to User B. This requires a multi-tenant architecture in which data is strictly isolated before it reaches the vector database or the AI model.
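
One way to enforce this isolation is to make the tenant ID a required argument of every read, so there is no code path that searches across tenants. The sketch below is an assumption-laden illustration: `TenantScopedStore` is a hypothetical in-memory stand-in for a vector database, and keyword matching stands in for similarity search.

```python
class TenantScopedStore:
    """In-memory stand-in for a vector database, partitioned by tenant."""

    def __init__(self) -> None:
        self._by_tenant: dict[str, list[str]] = {}

    def add(self, tenant_id: str, text: str) -> None:
        """Store a snippet inside the owning tenant's partition."""
        self._by_tenant.setdefault(tenant_id, []).append(text)

    def search(self, tenant_id: str, keyword: str) -> list[str]:
        """Search only the caller's partition; other tenants' data is never scanned."""
        candidates = self._by_tenant.get(tenant_id, [])
        return [t for t in candidates if keyword.lower() in t.lower()]


store = TenantScopedStore()
store.add("tenant-a", "Contract renewal date is 1 March.")
store.add("tenant-b", "Contract value is confidential.")

# Tenant A's query can only ever surface tenant A's snippets.
print(store.search("tenant-a", "contract"))
```

The tenant ID should come from the authenticated session on the server, never from a value the client can set freely.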

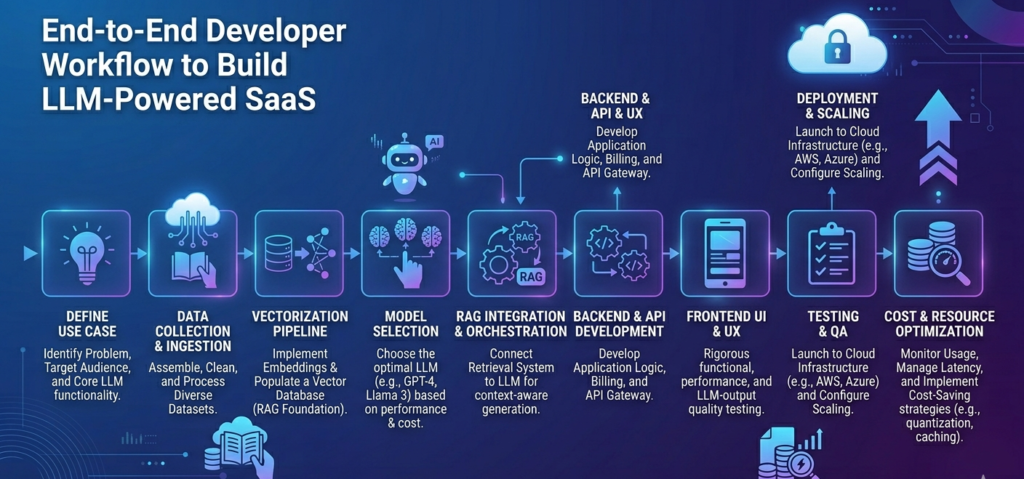

Actionable Steps for Developers

Follow this path if you’re ready to start:

- Define a Narrow Use Case: Do not create an overly general assistant. Target a particular industry, e.g., legal, medical, or engineering.

- Build a Vector Pipeline: Build a system that accepts PDFs, text files, and spreadsheets and converts them into vectors (numerical representations of meaning).

- Select an Orchestration Library: Consider tools such as LangChain or LlamaIndex. These libraries act as the glue that connects your database to the AI model.

- Keep Track of Your Costs: Use a dashboard to track how many tokens your users consume. This lets you price your SaaS appropriately.
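
For the vector pipeline step, documents are usually split into smaller chunks before they are converted into vectors. The sketch below shows one simple approach, fixed-size word windows with overlap. The function name and default sizes are assumptions for illustration, and it presumes the text has already been extracted from the PDF or spreadsheet.

```python
def chunk_text(text: str, chunk_words: int = 200, overlap_words: int = 40) -> list[str]:
    """Split text into word windows that overlap, so context at chunk borders is not lost."""
    if chunk_words <= 0 or not 0 <= overlap_words < chunk_words:
        raise ValueError("need chunk_words > 0 and 0 <= overlap_words < chunk_words")

    words = text.split()
    step = chunk_words - overlap_words  # how far each window advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_words]))
        if start + chunk_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(str(i) for i in range(10))
for chunk in chunk_text(sample, chunk_words=4, overlap_words=1):
    print(chunk)
```

Each chunk would then be passed to an embedding model and stored in the vector database together with its source document and tenant ID.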

Moving from traditional software to AI-native apps is a big change in the way we address problems. You can make a SaaS product with large language models that gives its users real, long-term value by focusing on Enterprise LLM solutions and strong RAG architectures.
